Last Updated on 28th April 2026


AI tools that generate fake explicit images of real people are now widely accessible, and children, young people and school staff are being targeted. Here is what you need to know and what you can do about it.

Understanding nudification platforms

Nudification platforms, sometimes called deepnude tools, are websites or apps that use artificial intelligence to make it appear that clothing has been removed from photographs of real people, creating fake nude, semi-nude or sexualised images without their knowledge or consent. They require very little technical skill or editing expertise: a user simply uploads a photo or video, and the AI generates a false but convincing image at the click of a button. Some people instead post nudification requests in group chats or forums so that others generate the image for them, either to distance themselves from accountability or to get around a platform's rules.

Crucially, these tools cannot see through clothes or remove actual clothing. Instead, they generate an entirely artificial body. Despite this, the results can appear highly realistic and believable. Innocent, everyday photos from private messages or social media have been manipulated in this way.

IMPORTANT TO UNDERSTAND

Although nudification tools violate most app store policies, they continue to appear in mainstream app stores and online search results. Age checks are often limited to users' self-declaration, and some websites require no account at all. Children can encounter these tools through ads or social media without actively searching for them. Between November 2025 and January 2026, Meta removed over 344,000 ads across Facebook and Instagram that attempted to promote nudification apps.

Children, young people and school staff

Anyone can be affected, but the evidence shows a clear pattern: girls and young women are disproportionately targeted. Research from Internet Matters indicates that the overwhelming majority of nude deepfakes online feature women or girls, and nearly all are non-consensual.

In school communities, nudification has been linked to bullying, harassment and child-on-child abuse. Staff are also at risk: incidents have caused anxiety and reputational harm, and some teachers have left their jobs after such images circulated. Schools have reported cases where photos taken from school websites, social media or staff directories have been used to target both pupils and staff.

Beyond the classroom, fabricated images can be used to coerce, pressure or extort young people. Because they appear real, they can silence or manipulate victims, causing fear, shame and lasting emotional distress even when the content is entirely artificial.

Where the UK stands legally

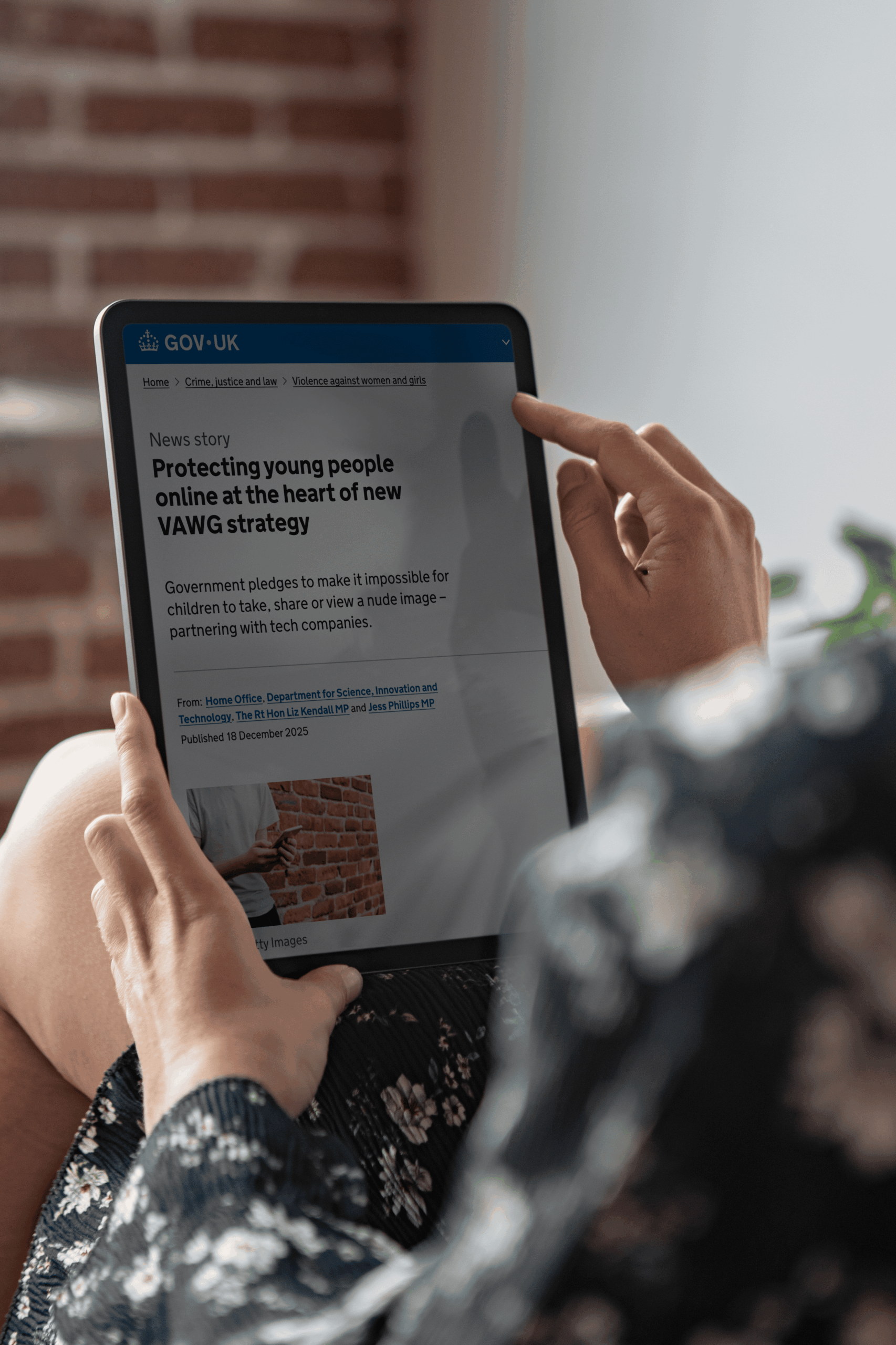

The legal framework is strengthening rapidly. In December 2025, as part of its Violence Against Women and Girls (VAWG) strategy, the UK Government announced plans to ban the creation and supply of nudification tools, which would make it illegal for companies to develop or profit from them.

This builds on existing protections under the Online Safety Act 2023, which made sharing, or threatening to share, non-consensual intimate images a criminal offence. The Data (Use and Access) Act 2025 went further, criminalising the creation of such images and requests for their creation, so the non-consensual use of nudification tools is itself a crime. This offence was brought into force in January 2026 and designated a priority offence under the Online Safety Act, meaning platforms now have a legal duty to proactively prevent and remove this content.

WHERE CHILDREN ARE INVOLVED

Any sexualised image of a person under 18 is classified as Child Sexual Abuse Material (CSAM), including AI-generated or digitally altered content. Creating, possessing or sharing such imagery is illegal.

The real and lasting harm

The impact on victims can be severe and long-lasting. Being the subject of a fake explicit image, even one known to be fabricated, causes significant psychological distress, including anxiety, depression and, in some cases, self-harm. Victims often describe feelings of humiliation, powerlessness and profound violation.

“Nudification harms victims, their families and also the young people who create and share these images. Prevention, early intervention and clear guidance are crucial.”

Jim Gamble, CEO, INEQE Safeguarding Group

REPORT REMOVE | A KEY TOOL FOR UNDER-18S

FINAL THOUGHT

Technology moves fast, but the values we teach children about respect, empathy and consent are timeless. Talking to young people about nudification tools is not about frightening them; it is about equipping them to make better choices, to look out for one another and to know that support is there if something goes wrong.

This article was produced by INEQE Safeguarding Group, incorporating Safer Schools. For more safeguarding resources, training, and school support, visit ineqe.com or our Online Safety Centre: oursafetycentre.co.uk
